All you need to do is read the paper or watch the news to realize that the world is becoming more difficult to understand than ever before. For instance, is the U.S. policy in Iraq achieving its intended results? Why is the stock market rising? How long will our healthcare system be able to continue protecting us from health crises when more and more people are finding it difficult to receive medical treatment due to rising health costs? In response to such enormous complexity, the thoughtful observer will likely have more questions than answers! Even relatively small social systems, such as business organizations, face so many problems and choices that it’s hard to know where to start. Should we build our CRM (customer relationship management) capacity

RIGOR VS. SUPPORT

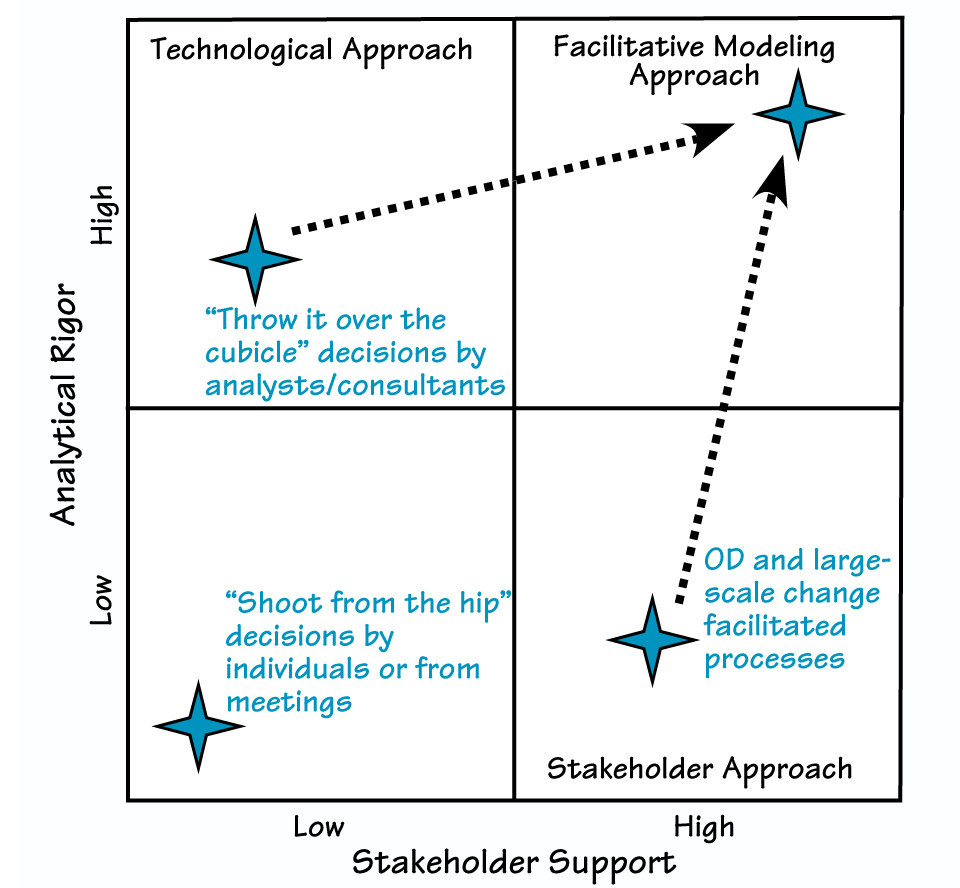

The Facilitative Modeling approach for making important decisions combines high levels of analytical rigor with high levels of stakeholder support.

Common Decision-Making Approaches

Because of this level of complexity in all aspects of organizational life, organizations usually rely on what I refer to as the “shoot-from-the-hip” approach for making important decisions. You’ve seen this technique if you’ve ever been in a team meeting in which a decision must be made today. Some members of the group toss out their ideas; most participants stay silent. Eventually, the team leader contributes his or her opinion, and everyone agrees. Decision made! Most meeting participants later bemoan the “poor” decision, claiming they won’t support it. The result? The new policy dies on the vine prior to implementation, leaving the organization the same as it was before.

In analyzing “shoot-from-the-hip” decisions, we observe that they lack strength in at least two major areas: analytical rigor and stakeholder support (see “Rigor vs. Support”). This isn’t a novel observation: Organizations have struggled with these two shortcomings for years and have devised various ways to overcome them.

1. The Technological Approach. Before making a major decision, managers often rely on analysts and their toolkit to increase the level of analytical rigor (or understanding of the issues); this is what I call the Technological Approach. Organizations adopting the Technological Approach generally do so because they’ve fallen victim to the mindset that they must find the perfect answer. The idea is that if you throw enough analysis at an issue, you can completely understand everything and uncover an ideal solution. These organizations think the answer must be found in the numbers.

To process the data they generate, organizations subscribing to the Technological Approach employ spreadsheets and statistical techniques. Some even build large simulation models to test nearly infinite possible scenarios. However, these tools can obscure the assumptions underlying the analysis. And because decision-makers aren’t privy to these hidden assumptions, they cannot compare them to their own mental models — so they do not trust the resulting recommendations. This lack of trust in the analysis is a major factor in why, although usually carefully applied, the Technological Approach rarely generates the support needed to lead to effective policy-making.

2. The Stakeholder Approach. In contrast, proponents of a Stakeholder Approach often put technology aside and instead try to build knowledge and support through stakeholder involvement. Well-known techniques that follow this approach include Future Search, Open Space Technology, the World Café, various forms of dialogue, and even some facilitated mapping sessions using causal loop diagrams and systems archetypes. These methodologies share an underlying mindset: by getting representation from different players in “the system,” everyone will gain a broader view of the problem at hand. Further, by allowing participants to express divergent perspectives in an unconstrained fashion, the Stakeholder Approach lets them formulate creative, systemic recommendations.

Whether trying to define the problem or to generate solutions, people applying these processes (if only implicitly) tend to follow a model of interaction described by Interaction Associates as the Open-Narrow-Close model. During the Open phase, participants get all of the data on the table while defining the problem; if they’re generating solutions, this is the stage in which creative solutions spring forth from the group’s collective wisdom. During the Narrow phase, contributors take an overwhelming list of choices (problems or solutions) and narrow them down to a few to consider further. During the Close phase, they actually choose which problems to tackle or solutions to implement and how to do so. Managers then often assign groups to each of the major action items identified during this stage and give them their blessing to “go forth and implement.”

The Stakeholder Approach includes processes that build broad support — unlike what often occurs in the Technological Approach. Plus, it helps those involved to see the system from a broad spatial and sometimes temporal perspective. These results are necessary and important for creating effective changes in any system.

A major weakness of the Stakeholder Approach, however, is that the processes used to narrow and choose

APPROACHES FOR IMPLEMENTING SYSTEMS THINKING

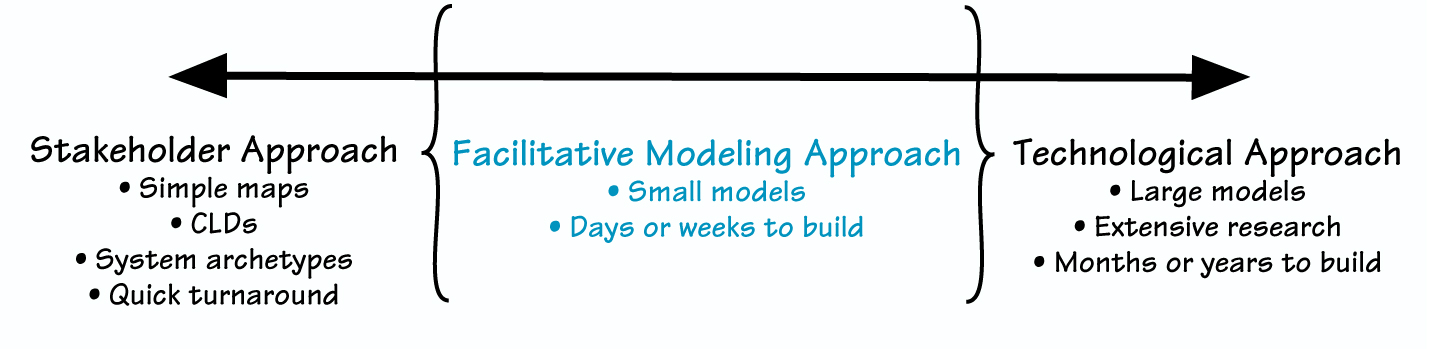

Facilitative Modeling serves as a middle ground between the Technological Approach and the Stakeholder Approach.

among the resulting divergent issues/strategies lack rigor and usually

rely on the assumption that, simply by having enough stakeholder representation, the group will make excellent decisions. But as Irving Janis learned by studying extremely poor decisions (such as the Bay of Pigs fiasco and the escalation of the Vietnam War, which he described in his book Groupthink), groups with very high average IQs can function well below expectations.

Barry Richmond of High Performance Systems, Inc. created a simple example called the Rookie-Pro exercise that also illustrates this point. Despite working with a much simpler human resource system than that found in most organizations, only 10 to 15 percent of individuals can correctly guess the system’s future behavior, even after lengthy discussion! So the assumption that the collective wisdom of the group will surface in a way that leads to optimal decision-making is tenuous at best.

In addition, the framework employed to guide team members in narrowing and choosing among different options doesn’t help to determine if elements of the proposed solutions need to be implemented at different times and in varying degrees. The result is that the organization often chooses to put the same amount of resources and effort into each action item. Nor does the Stakeholder Approach determine if the issues are interconnected — different groups may be separately implementing policies that should be done together or, even worse, are mutually exclusive.

Facilitative Modeling

The good news is that there is a way to both rigorously understand (or even reduce) complexity and improve stakeholder support! Practitioners are often drawn to the field of systems thinking because of its promise to build collective understanding, to get everyone on the same page. Even so, these managers can be pulled between the Technological Approach (big simulation models created by experts) and the Stakeholder Approach (facilitated sessions using causal loop diagrams or systems archetypes). But there’s a middle ground, a large range of activities that I refer to as “Facilitative Modeling,” where tremendous power resides (see “Approaches for Implementing Systems Thinking”).

Facilitative Modeling is a Technological Approach, because it uses computer simulation and the scientific method to build understanding. It is also a Stakeholder Approach, because it requires the input of the important stakeholder groups, uses a common language so everyone can get on the same page, and creates small, simple, and easy-to-understand models. The models don’t generate the answer; rather they facilitate rigorous discussion. Facilitative Modeling usually culminates in a facilitated multi-stakeholder session in which the participants generate common understanding and make well-informed decisions.

Overview of the Process

In the Facilitative Modeling process, a group of stakeholders identifies and addresses an issue critical to their collective success. The issue is often one that has been resistant to organizational efforts to “fix” it. After choosing the area for exploration, the group sets the agenda for a facilitated session. In preparation for that meeting, several individuals in the group serve as a modeling team and develop (alone or working with a modeler) a series of simple systems thinking simulation models that clearly articulate important components of the issue. These components may include the historical trend for that issue, the future implications if the trend continues, possible interventions, and the unintended consequences of some of these solutions. The models are deliberately kept small so that stakeholders will understand them and the development process remains manageable.

However, it’s not enough just to make models! In fact, building useful models is probably less than half of what makes a Facilitative Modeling initiative successful. The process requires the modeling team and perhaps others to create additional materials for the facilitated session, such as workbooks for tracking experiments and writing reflections, as well as CDs of the models for after the session. A facilitator and/or design team needs to carefully plan various aspects of the session, such as appropriate questions, suggested experiments to run on the model, and a mix of small and large group discussion.

The facilitated session represents the culmination of the process. During the gathering, teams of two to four people explore the models on computers. The session includes large group interludes and debriefs between exercises. And at the end of the session, participants discuss and agree on next steps based on the insights that emerged during the event (see “The Facilitative Modeling Process”).

THE FACILITATIVE MODELING PROCESS

A Facilitative Modeling Process contains the following major steps:

- Identify an issue of importance

- Determine stakeholders who have impact on/from the issue

- Use stakeholders to redefine the issue (either individually or collectively)

- Develop an agenda for a facilitated session

- Develop (usually more than one) model that surfaces important aspects of the issue

- Develop supporting materials

- Participate in a session using the models as tools for helping stakeholders explore, experiment with, and discuss the issues

- Use insights from the models and discussion to determine action items and next steps

Facilitative Modeling in Action

Using the Facilitative Modeling Process outlined above, a nonprofit organization recently explored potential issues associated with implementing new funding policies. This organization was responsible for improving the health and welfare of the poor population in a community by giving funds to other local nonprofits to provide services. Originally, the organization had determined which organizations to fund and how much funding to supply by analyzing the services that the target organization would provide; in recent years, it had settled into just increasing the amount of funding incrementally over the previous year’s figure. To create more accountability among the local organizations and improve outcomes in the community, the nonprofit had decided to apply a performance-driven approach to funding (that is, base funding on projected improvements to performance indicators and then renew the funding if the community experienced noticeable improvement in those areas).

Some members of the organization, as well as members of an important partner group, were concerned about the potential barriers to implementing this updated approach and were eager to understand possible unintended consequences that might result from the change. They agreed that a Facilitative Modeling approach would be an excellent way to surface and discuss these issues in a way that would give all stakeholders shared insight. In little more than five days of working with a facilitator and a few representatives from the organization and its partner, the team developed three small “conversational” models for a one-day facilitated session.

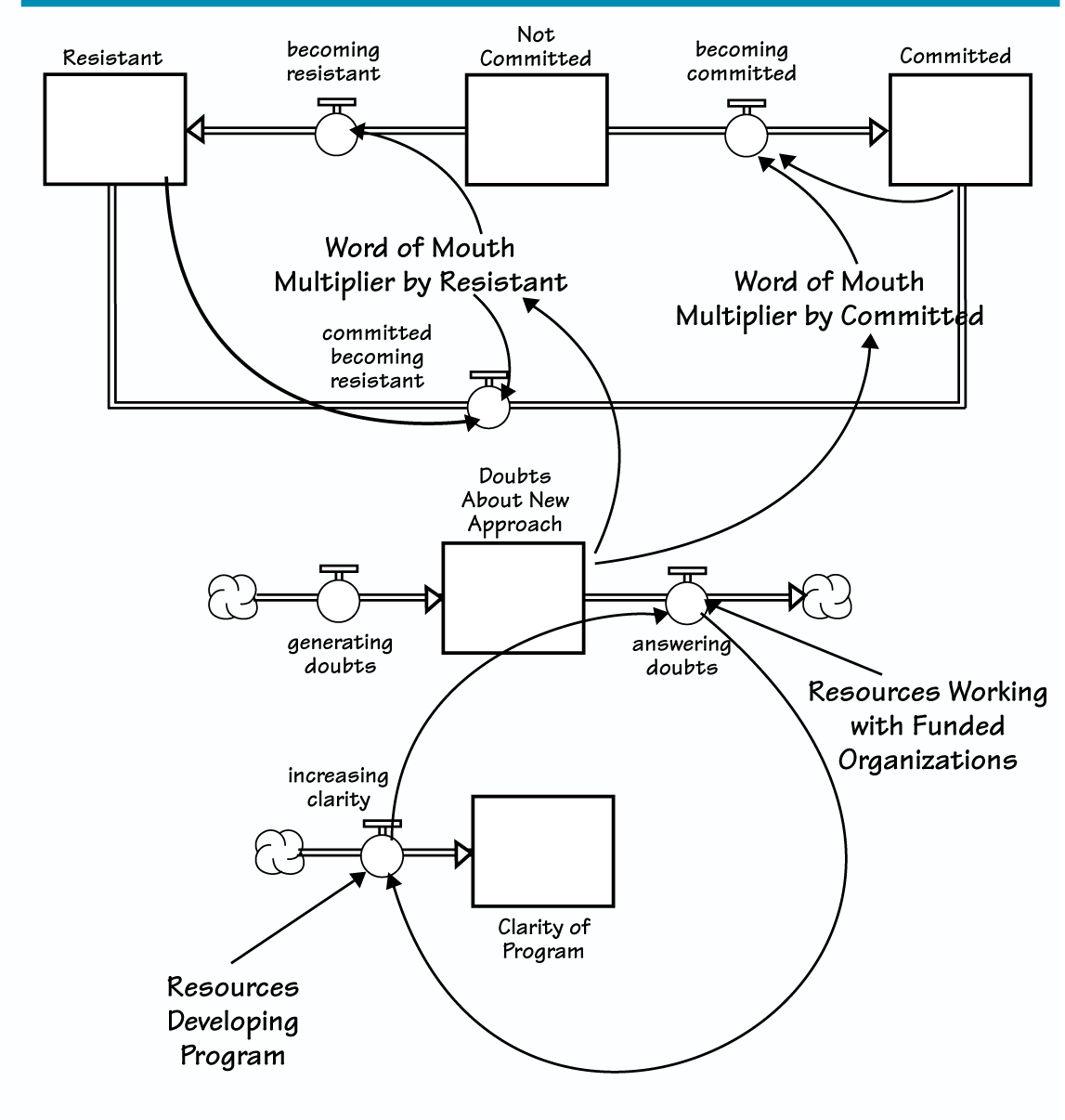

At the beginning of the session, the group adopted a set of ground rules to guide their interactions. Once participants agreed to the guidelines, they began by experimenting with the first model. The purpose of this initial simulation was to surface and discuss the potential dynamics associated with implementing the new funding approach. Allowing subgroups to work with the models at their own speed often increases their level of understanding. However, even those with some skill at reading stock and flow diagrams similar to the one shown here can be quickly overwhelmed by maps. The simulation included a function that let the subgroups slowly unfurl pieces of the map so that they more easily followed its logic (see “The First Map”).

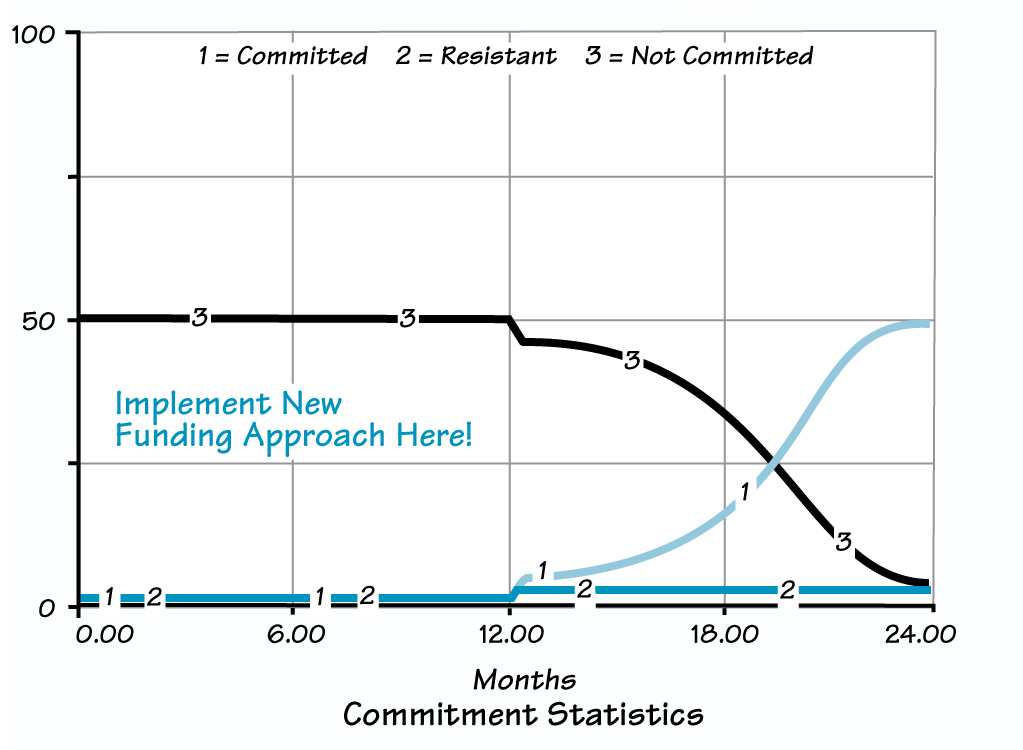

The map shown here represents one way to look at the different organizations affected by the nonprofit’s funding decisions. The language of stocks and flows is ideally suited for looking at this issue. The three stocks at the top of the diagram (the rectangles labeled “Resistant,” “Not Committed,” and “Committed”) represent groups of organizations. Currently, because the new approach has yet to be implemented, all organizations would belong in the “Not Committed” stock. Eventually, as the new funding approach is made into policy, organizations would begin to move into the “Committed” or “Resistant” stocks. Obviously, if possible, the funding group wanted to avoid any organizations becoming “Resistant.”
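The stock-and-flow structure described above can be sketched in a few lines of Python. This is only an illustration, not the model used in the session: the function name, the number of organizations, and both monthly rates are hypothetical values chosen for readability. The sketch assumes, as the session's model did, that no flow leaves the “Resistant” stock.

```python
def simulate_commitment(total_orgs=100.0, commit_rate=0.10, resist_rate=0.03,
                        months=24):
    """Step three stocks forward one month at a time.

    All parameter values are hypothetical. Each month a fixed fraction of the
    "Not Committed" stock flows into "Committed" and another fraction flows
    into "Resistant". Nothing flows back out of either stock.
    Returns a list of (month, resistant, not_committed, committed) tuples.
    """
    # Before the policy exists, every organization is "Not Committed".
    resistant, not_committed, committed = 0.0, total_orgs, 0.0
    history = [(0, resistant, not_committed, committed)]

    for month in range(1, months + 1):
        # Flows are computed from the stock levels at the start of the month.
        becoming_committed = commit_rate * not_committed
        becoming_resistant = resist_rate * not_committed

        # Stocks change only through their inflows and outflows.
        not_committed -= becoming_committed + becoming_resistant
        committed += becoming_committed
        resistant += becoming_resistant

        history.append((month, resistant, not_committed, committed))
    return history


if __name__ == "__main__":
    for month, r, n, c in simulate_commitment()[::6]:
        print(f"month {month:2d}: resistant={r:5.1f} "
              f"not committed={n:5.1f} committed={c:5.1f}")
```

Because organizations only move between stocks, the three levels always add up to the starting total. Changing either rate and rerunning is the kind of experiment a subgroup might try when asking how many “Resistant” organizations the program could tolerate.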

At the session, the individual groups discussed the meaning of each of the stocks. What does it mean to be “Committed”? “Resistant”? They mulled over the question, What number of “Resistant” organizations would pose a problem for the program as a whole? Can “Committed” organizations become “Resistant”? Is it realistic to assume (as the model does) that “Resistant” organizations never become “Committed”?

Talking about the diagram helped the subgroups, and eventually the entire group, reach consensus about how organizations might become committed or resistant to the changed funding policies. For many of the participants, it was the first time they had discussed the potential that some of their client organizations might resist the changes! By working with the model, the group was able to surface an unpleasant concept in a way that allowed them to grapple with its implications for their changed strategy.

They then entered different values into the model to experiment with how the funding organization might allocate its resources in the coming months. How much effort should they put into developing the performance-driven funding program? How much into explaining the program to the funded organizations? And how much of each should they do prior to officially announcing the program? After announcing it? In short, the group wrestled with the systemic “orchestration” (a concept developed by Barry Richmond) of resources: the magnitude and timing of efforts required to successfully implement the strategy.

The group concluded that, in the first phase of development, they should apply most of their efforts to designing the new policy. Doing so builds the “Clarity of the Program,” which is useful in preventing “Doubts About the New Approach” down the road. They realized that they would need to allocate at least some resources in the first phase to working with the client groups and addressing their doubts about the change. This process would also help them to refine the approach (see “Implementation Timetable”). The next phase would require additional work with the other stakeholder groups to explain the program prior to release. The third and fourth phases would involve implementation; this is when the nonprofit’s staff members would spend most of their time addressing the doubts of the affected organizations.

The group realized that the exact numbers of organizations in each category wouldn’t be the same in real life as in the simulation, but that the stories described by the model were consistent with what they now expected might happen when overhauling their approach to funding. In keeping with the need for systemic orchestration, the group concluded that their allocation of strategic resources must shift over time, depending on which phase they were in (for example, in the second phase, they would need to apply some resources to program development and even more to working with stakeholders).
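One way to make a phase-dependent allocation concrete is to write the timetable down as data and look up the split for any given month. The sketch below is an assumption-laden illustration: the phase lengths, the effort shares, and all names (`Phase`, `TIMETABLE`, `allocation_for_month`) are hypothetical. Only the ordering, with design effort falling and stakeholder work rising from phase to phase, follows the group's conclusions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Phase:
    name: str
    months: int            # hypothetical phase length
    design_share: float    # share of effort spent designing the policy
    outreach_share: float  # share spent working with funded organizations


# Hypothetical numbers; only the direction of the shift comes from the text.
TIMETABLE = [
    Phase("Phase 1: development", 3, 0.8, 0.2),
    Phase("Phase 2: pre-release", 3, 0.4, 0.6),
    Phase("Phase 3: implementation", 3, 0.2, 0.8),
    Phase("Phase 4: implementation", 3, 0.1, 0.9),
]


def allocation_for_month(month, timetable=TIMETABLE):
    """Return the Phase in effect during a zero-based month index.

    Months past the end of the timetable stay in the final phase.
    """
    elapsed = 0
    for phase in timetable:
        elapsed += phase.months
        if month < elapsed:
            return phase
    return timetable[-1]


if __name__ == "__main__":
    for month in range(12):
        phase = allocation_for_month(month)
        print(f"month {month:2d} {phase.name}: "
              f"design {phase.design_share:.0%}, "
              f"outreach {phase.outreach_share:.0%}")
```

Keeping the timetable as plain data makes it easy for a group to argue about the numbers themselves, which is the point of a conversational model.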

Working with Subsequent Models

In Facilitative Modeling, each model tends to add to the understanding generated by previous ones. Because the performance-based funding approach would require implementing a new IT system, the second model helped participants explore how a funded organization would need to allocate resources in order to develop a new IT system and build its staff’s capacity to use it. The third model served as the capstone exercise, because it required participants to explore how client organizations might allocate their resources across the following needs: providing services, building and maintaining the IT system, investing in staff skill development, and collaborating with partner organizations.

THE FIRST MAP

The three stocks at the top of the diagram (the rectangles labeled “Resistant,” “Not Committed,” and “Committed”) represent groups of organizations. As the new funding approach is made into policy, organizations would begin to move from the “Not Committed” stock into the “Committed” or “Resistant” stocks.

During the large-group debrief of the third model, the nonprofit’s senior director said that he didn’t like one dynamic that he experienced with the model. In all cases, after the funding change, the youth population’s sense of disconnection from the community initially worsened, even when the simulated strategies encouraged a majority of client agencies to be committed to the shift and to effectively implement performance-based approaches to providing services. When he experimented with the model, the director kept trying to avoid this “worse-before-better” dynamic. Through probing questions, the group learned that it wasn’t that he didn’t expect this behavior to happen; he just wished it wouldn’t!

IMPLEMENTATION TIMETABLE

By using the model to explore the magnitude and timing of efforts required to successfully implement the strategy, the group concluded that, in the first phase of development, they should focus on designing the new policy.

This revelation led to an interesting discussion of what is often an undiscussable in the public sector: that policies designed to improve social systems often take time before they lead to noticeable improvements and that there is often conspicuous degradation of performance in the interim. The director expressed that it was political suicide to admit that things might actually get worse before improving. Ultimately, through the facilitated discussion, he came to understand that regardless of whether he wanted to admit that such a dynamic might occur, it was inevitable, given the long delays before activities such as IT development and skill-building would have a positive effect on services. Through this admission, he and his staff were then able to explore options for mitigating the effects of this unavoidable dynamic.
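The mechanism behind a “worse-before-better” pattern can be shown with a very small simulation. The sketch below is not the session's third model. It is a hypothetical illustration in which some staff effort is diverted from services into capability building (such as IT development or skill-building) and the payoff arrives only after a fixed delay. The function name and every parameter value are assumptions.

```python
from collections import deque


def simulate_service_output(invest_share=0.3, delay_months=6,
                            gain_per_unit=0.1, months=24):
    """Return monthly service output when effort is diverted to capability.

    Hypothetical model: each month, `invest_share` of effort goes to
    capability building instead of services. That investment raises
    productivity by `invest_share * gain_per_unit`, but only after
    `delay_months` have passed. Output with no investment is 1.0.
    """
    if delay_months < 1:
        raise ValueError("delay_months must be at least 1")

    productivity = 1.0
    # Investments still maturing; the leftmost entry matures next.
    pipeline = deque([0.0] * delay_months)
    output = []

    for _ in range(months):
        productivity += pipeline.popleft()            # delayed payoff arrives
        pipeline.append(invest_share * gain_per_unit)  # new investment queued
        # Only the effort not diverted to investment produces services.
        output.append((1.0 - invest_share) * productivity)
    return output


if __name__ == "__main__":
    for month, level in enumerate(simulate_service_output()):
        marker = "below baseline" if level < 1.0 else "above baseline"
        print(f"month {month:2d}: output {level:.3f} ({marker})")
```

With these assumed values, output drops as soon as effort is diverted, stays flat until the first investment matures, and then climbs past the no-investment baseline. Shortening the delay or lowering the investment share changes the depth and length of the dip but does not remove it, which mirrors the director's experience of being unable to avoid the dynamic.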

Ultimately, the nonprofit’s staff left the session with useful insight in several areas. First, they all understood that some of their client organizations might resist the new approach. Second, they realized that it would be helpful for them to include those organizations in developing the program. Third, the group agreed that building staff skills was likely to be a more challenging impediment to successful implementation of the changed approach than developing the IT infrastructure. Finally, they accepted that systemwide implementation would require orchestrating a series of activities that, even in the best of circumstances, would cause a “worse-before-better” dynamic. All of these insights were just the beginnings of an ongoing dialogue, and all were facilitated by using small models to focus the conversation.

The Value of Facilitative Modeling

As shown in the example above, there is a powerful place for small models in a facilitated environment. The process used for developing good systems thinking models increases the rigor of the analysis and captures the benefits of a Technological Approach. At the same time, by keeping models small, Facilitative Modeling improves on the benefits of a Stakeholder Approach and increases the likelihood that all participants end up in alignment. Moreover, the Facilitative Modeling approach uses a language — stocks and flows — that is more representative of reality than other visual mapping languages. For this reason, the participants are able to discuss and come to a novel understanding of the assumptions built into the model. Running the simulation provides an essential test of the group’s understanding and facilitates further conversations about the likelihood of different results. The computer-generated “microworld” creates a safe environment for experimentation.

NEXT STEPS

- Read up on the value of small models, starting with the resources in the “For Further Reading” section.

- It’s unusual to find modeling and facilitation skills in the same person, so look around your organization for people who might work in teams to create one of these events. They’ll likely need some training.

- Pick an issue that is generating a “buzz” in the organization. Quickly develop a map and model that fits on one screen or one flipchart. Don’t search for the truth, just useful insights.

- Keep at it! Rather than using Facilitative Modeling as a one-time event, think about applying it as part of an ongoing organizational dialogue.