The drive toward improvement has become a way of life in corporations today. Total Quality Management, Business Process Reengineering, and other improvement techniques have proven to be powerful tools for enhancing the effectiveness of many organizations. However, designing, executing, and bettering improvement programs is not easy. For every successful effort, there are many more failures. While suggesting new and valuable opportunities for change, process improvement techniques still fall prey to the barriers that limit other organizational change efforts.

To explore why improvement efforts so often fail to produce lasting results, my colleague Nelson Repenning and I, along with other members of the MIT System Dynamics Group, have studied a variety of improvement initiatives in large organizations. We have worked in close partnership with the managers from these firms and gratefully acknowledge their help. What we’ve found is that many improvement efforts falter because managers mistakenly attribute the source of problems to the people in the system, not to the processes. Over time, managers receive feedback that seems to confirm their initial beliefs. We refer to this process as “superstitious learning,” because people develop strong, but false and often harmful, beliefs.

For example, the electronics division of a major global manufacturing firm carried out two initiatives that led to markedly different results. In the first, management was able to break free from the cycle of superstitious learning to achieve significant, lasting results. In the second, superstitious learning became increasingly entrenched, and the program fell far short of its potential. These examples can serve as lessons for all organizations as we strive for lasting improvement.

The MCT Initiative

The Manufacturing Cycle Time (MCT) initiative focused on the problem of long cycle times in the manufacturing process. A new general manufacturing manager reoriented the focus from labor and machine utilization to cycle time and value-added percentage (the fraction of the cycle time in which a feature or function is added to a part). He didn’t tell people what to do. Instead, he began by visiting plants. He would rummage through the bins of parts, pick one up, and ask why it had been in inventory for so long. And people were motivated to experiment with ways to measure and reduce cycle times.

The initiative was extremely successful. Over several years, the division reduced average cycle time from more than 15 days to less than a day. The quality of finished products improved, and manufacturing became more flexible. Revenue, profit, and cash flow all increased significantly. The huge reduction of work-in-process (WIP) inventory freed up so much floor space that the company was able to cancel the construction of two planned new plants, with additional savings of hundreds of millions of dollars.

The PDP Initiative

A second project, the Product Development Process (PDP) initiative, was launched in part because of the success of MCT. PDP was designed to improve the productivity of the development process. Implementation differed from the MCT effort; for PDP, a dedicated design team worked two years to benchmark best-in-class electronics producers and capture best practices within the organization.

The team produced a detailed, well-documented development process that focused on getting the engineers to follow procedure and use project management tools. In addition, the design team created the “Bookshelf,” a library of documented designs to enhance modularity and increase learning. A third concept was the “Wall of Innovation.” Engineers were encouraged to experiment with new technologies, but after a certain point in the design process—the Wall of Innovation—an engineer could go forward only with technologies that had been proven and posted to the Bookshelf.

Overall, PDP produced mixed results. The company estimates that it achieved about 80 percent of its goal of switching from traditional design tools to CAD/CAE/CAM tools. But the initiative was only 20 percent successful on its other objectives: the use of project management tools, the Bookshelf, and the Wall of Innovation.

Reducing Process Problems

These two examples present a paradox. The MCT effort was launched by a lower-level executive with almost no budget, a four-person staff, and no significant benchmarking. Yet it was highly successful. In the case of the PDP project, top management supported the effort, a cross-functional team designed the new processes, there was extensive benchmarking, and the design team had plenty of resources for training and internal marketing. Yet the initiative didn’t produce lasting results.

To understand this paradox and, more generally, why so many improvement programs don’t work, we developed a set of system dynamics models. The models can be tailored to specific settings such as manufacturing or product development.

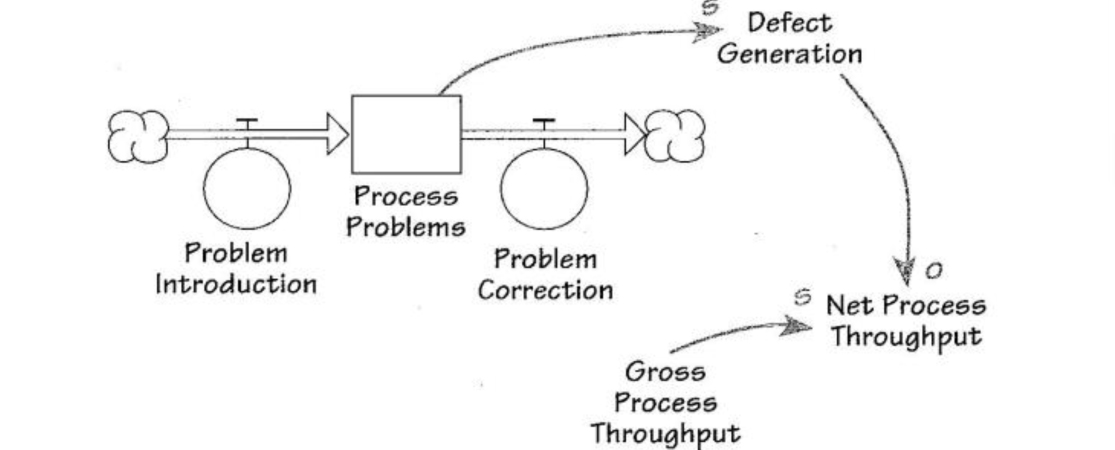

A key concern in any process is the adequacy of net throughput, that is, the number of usable widgets, whether circuit boards or engineering drawings, that are produced by the process relative to the rate required (see “The Stocks and Flows of Defects and Process Problems”). In general, when faced with a shortage of usable widgets, workers and managers have only two options: work harder or work smarter. Working harder involves expanding capacity, utilizing existing capacity more intensely, or reworking defective output. Each of these options boosts throughput, but only at a significant and recurring cost. The fundamental insight of the quality movement is that it is more effective to work smarter by eliminating the process problems that generate defects. Eliminating process problems, however, requires managers to train workers in improvement techniques and to provide them with time off from their normal duties. Most important, managers must give workers the freedom to experiment with new solutions and new ideas, knowing that many of these won’t work (see “Work Harder or Work Smarter?”).

What Prevents Us from Working Smarter?

Clearly, the high leverage point for improvement is reducing process problems by working smarter—not working harder to compensate for low productivity. Eliminating process problems decreases the generation of new defects once and for all and frees up resources for investment in learning, creating a virtuous cycle of continuous improvement.

But even when we understand the leverage in working smarter, we seldom do it. There are several reasons. First, defects are easier to see and more tangible than process problems. In a manufacturing setting, for example, the defective products sit in full view on the factory floor. In contrast, process problems are often invisible. They exist in relationships, interactions, and activities.

Second, process improvement takes time, whereas defects are usually easily identified and can be quickly repaired or remade. Process improvement is also less certain than fixing defects. It requires a degree of faith. A competence in improvement has to be “grown” organically through improvisation and experimentation, but many people are averse to uncertainty and ambiguity. As a result, we are tempted to work around the process problems and just fix the defects.

Finally, our companies are conditioned to reward the heroes who put out the fires, not the folks who keep a crisis from occurring in the first place. As one interviewee in our study said, “Nobody ever gets credit for fixing problems that never happened.”

Biased Attributions

But there’s another, more insidious reason that we’re often unable to work smarter even when we know it’s the right thing to do. Suppose you’re a manager faced with inadequate throughput. You can solve the problem by having people work harder or work smarter. To decide how to proceed, you have to make a judgment about the cause of the low throughput. If you believe the system is generating defects because of process problems, then you’ll likely focus on working smarter. On the other hand, if you think that your workers are lazy or undisciplined, you’ll push them to work harder.

How do these judgments play out in the improvement setting? We see an operator at a machine, he or she performs an operation, and a defective widget pops out. Was the process or the operator at fault? Process problems are usually distant in space and time from the defects they create. They’re often not obvious. And the delay between the process problem and defect detection is long, variable, and often unobservable. Thus, it’s easy to conclude that the cause of low throughput is inadequate worker effort or insufficient discipline, rather than process problems. This attribution of a problem to the individuals in the system rather than to the system itself is so frequent and pervasive that psychologists have come to call it the “fundamental attribution error.”

Based on this misattribution, managers commonly increase production pressure to get more out of their workers. But while production pressure boosts throughput in the short run, it also has an undesirable long-term side effect. Workers under greater pressure to boost production have less time to spend on improvement and learning. They are less willing to conduct experiments that might temporarily reduce throughput. With less effort dedicated to improvement, fewer process problems are corrected. Defects increase. Throughput falls, and managers are forced to increase production pressure even more, creating a vicious cycle.
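The vicious cycle described above can be illustrated with a toy simulation. This is only a minimal sketch, not the system dynamics models developed in the study: the function name `simulate`, the two policy labels, and every parameter value (capacity, target, inflow of new problems, fix rate, defects per problem) are assumptions chosen for illustration. Under the "harder" policy, any throughput shortfall pulls time away from improvement; under the "smarter" policy, a larger share of time is protected for improvement and held there.

```python
def simulate(policy, weeks=100):
    """Return weekly net throughput under a 'harder' or 'smarter' policy.

    All numbers below are illustrative assumptions, not data from the study.
    """
    capacity = 100.0             # widgets/week if all time went to production
    target = 80.0                # required net throughput
    problem_inflow = 1.0         # new process problems arising each week
    fix_rate = 0.25              # problems fixed per week, per unit of improvement share
    defects_per_problem = 0.005  # defect fraction contributed by each problem

    problems = 40.0              # stock of process problems (starts in equilibrium)
    share = 0.10                 # share of work time spent on improvement
    if policy == "smarter":
        share = 0.15             # protect more improvement time and hold it fixed

    history = []
    for _ in range(weeks):
        gross = capacity * (1.0 - share)
        defect_fraction = min(1.0, defects_per_problem * problems)
        net = gross * (1.0 - defect_fraction)
        history.append(net)

        if policy == "harder":
            # Production pressure: a shortfall shifts time from improvement
            # to production, down to a floor of zero improvement time.
            gap = max(0.0, target - net) / target
            share = max(0.0, share - 0.05 * gap)

        # Stock update: problems accumulate unless improvement removes them.
        problems += problem_inflow - fix_rate * share * problems
    return history


if __name__ == "__main__":
    for policy in ("harder", "smarter"):
        run = simulate(policy)
        print(policy, "first:", round(run[0], 1),
              "peak:", round(max(run), 1), "last:", round(run[-1], 1))
```

With these assumed parameters, the "harder" run rises above its starting level for a while and then ends well below it, while the "smarter" run dips first and ends modestly above where it began. The specific numbers carry no weight; the point is the shape of the two trajectories.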

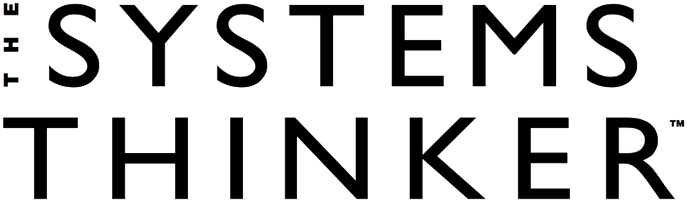

The Stocks and Flows of Defects and Process Problems

Net throughput of any process can be increased either by boosting gross throughput or by reducing the rate of defect generation. Defects arise from the stock of underlying process problems.
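One way to write this stock-and-flow structure down is sketched below. The symbols are assumptions introduced here for illustration and do not come from the original models: $T_{\text{net}}$ and $T_{\text{gross}}$ are net and gross throughput, $D$ is the rate of defect generation, $P$ is the stock of process problems, and $I$ and $E$ are the assumed rates at which process problems are introduced and eliminated.

```latex
\begin{align}
T_{\text{net}}(t) &= T_{\text{gross}}(t) - D(t) \\
D(t) &= f\bigl(P(t)\bigr), \qquad f' > 0 \\
\frac{dP}{dt} &= I(t) - E(t)
\end{align}
```

Read this way, working harder acts on $T_{\text{gross}}$ directly, while working smarter raises $E$, which lowers $P$ and therefore $D$ only gradually.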

Work Harder or Work Smarter?

Managers have two options for increasing net throughput to meet the goal. They can Work Harder (B1) by adding capacity, increasing the work week, pushing the work force to work harder, and so on. The other option is to Work Smarter (B2) by training employees in improvement techniques and undertaking experimentation.